FLAC? ALAC? Dolby TrueHD? All three of these differ in detail, but work in a broadly similar way to deliver lossless compression. We’ll see how they do that shortly, but first, what’s the big deal about lossless compression?

Lossy compression

Digital audio consumes a lot of space. Consider the CD. It uses 16-bit samples and a 44.1kHz sampling rate. Multiply these out (and don’t forget that there are two channels), and you quickly see that you need a bit more than 1.4 megabits per second for CD-quality audio. In storage terms, that comes to a little more than 10MB per minute of music.*
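If you'd like to check that multiplication, here is a minimal sketch. The function name is my own, and I'm assuming decimal megabytes (1MB = 1,000,000 bytes).

```python
def pcm_bitrate_bps(sample_rate_hz, bits_per_sample, channels):
    """Raw (uncompressed) PCM data rate in bits per second."""
    return sample_rate_hz * bits_per_sample * channels


# CD audio: 44.1kHz, 16 bits, two channels
cd_bps = pcm_bitrate_bps(44_100, 16, 2)

# Convert to megabits per second, then to megabytes per minute
cd_mbps = cd_bps / 1_000_000
cd_mb_per_minute = cd_bps / 8 * 60 / 1_000_000

print(f"{cd_mbps:.4f} Mbps, {cd_mb_per_minute:.3f} MB per minute")
```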

These days that might not seem like much. But going back to the late 1990s when music downloads first started to become a thing, that was huge! With a dial-up modem, it could take many minutes, or even hours, to download an album.

Of course, computer engineers had long since developed methods for compressing computer files so that they required less storage space. Unfortunately, those methods largely relied on identifying redundancies (e.g. repetitions and other patterns) in the data. And music simply doesn’t have many of these kinds of redundancies.

Fortunately, some clever engineers, drawing on the work of those who’d researched how humans perceive sound, had developed this thing called MP3. (This was not the first such thing, but it was the one that became ubiquitous, and persists to this day.) MP3 relied on science showing that our hearing simply does not capture a bunch of stuff. For example, loud sounds “mask” sounds in their vicinity. That is, sounds which are close in time to the loud sound – not just after, but also a little before – and more so if they are close in frequency, are not heard at all.

This science was turned into algorithms which identified and removed such “inaudible” elements from the music. The result was an astonishing shrinking of the sizes of music files, with minimal impact on sound quality.

I chose the word “minimal” carefully. There’s always argument about the audibility of this process. But that argument frequently fails to take into account important factors, such as the degree of compression.

With MP3, a bitrate of 128kbps (kilobits per second) became a kind of de facto standard. That’s roughly 11:1 compression relative to the original CD data. There’s no doubt that if something is compressed to 64kbps, you can hear it. But with a good MP3 encoder and a bitrate of 192kbps, you’re unlikely to perceive any difference with most music.
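Those ratios are easy to work out for yourself. This is just a sketch, assuming the comparison is against stereo CD audio at 1411.2kbps. The names are mine.

```python
# Uncompressed stereo CD audio, in kilobits per second
CD_KBPS = 44_100 * 16 * 2 / 1000


def compression_ratio(mp3_kbps):
    """How many times smaller the MP3 stream is than CD audio."""
    return CD_KBPS / mp3_kbps


for kbps in (64, 128, 192, 320):
    print(f"{kbps}kbps is about {compression_ratio(kbps):.1f}:1")
```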

While most MP3 music is encoded at a constant rate, the variable bit rate (VBR) option can provide higher quality by allocating more data to the passages where it’s most needed. Here’s an MP3 album with VBR. As you can see, the average bitrate is around 260kbps, or about 18.5% of the size of the original:

The quality of the encoder makes a difference. For some years I used harpsichord music as a test of MP3 quality, because it was the Achilles heel of the format. Even at 320kbps – the highest bitrate available in the MP3 format – the effect was often obvious. But encoders got better, and the effect disappeared.

(The fact that MP3 is “lossy” misleads a lot of people into listening for the wrong things when assessing its quality. It loses elements of the music that the algorithm has been told are inaudible, although past a certain threshold it moves into eliminating things that are less audible, rather than allegedly inaudible. See for example the effect of the hard 16kHz filter in the graphic at the top of the page. But the real giveaway of poor MP3 encoding has little to do with traditional high-fidelity concerns of spaciousness, imaging, a sense of reality. Instead, it has more to do with a weird disconnectedness of sound, along with barely perceptible warbles and noises that are nothing like what happens in the analogue world.)

I am now writing in the middle of 2021. We no longer use dial-up modems, which provided Internet speeds counted in kilobits per second. The lowest NBN tier promises (delivers? Who’s to say) at least 25Mbps. That’s roughly three orders of magnitude faster than those old dial-ups, and enough for more than fifteen uncompressed CD streams to run down the Internet pipe to your home.

So why not lossless? Sure, in extensive double-blind scientific testing, a particular high-quality lossy compression algorithm may be demonstrated to be aurally identical to uncompressed audio. But there’s still some doubt, and the fact that we can deliver lossless audio at low cost means, well, we should.

And we do.

Lossless audio

If music streaming were first to be introduced to the world in 2021, there’s a strong possibility that it wouldn’t be digitally compressed at all. After all, there are those fifteen uncompressed CD streams I mentioned a moment ago.

But history has this habit of happening. We got to 2021 from the late 1990s through a period where reducing the data rate of streaming, or downloading, music was still important, although not important enough to make us keep using 64kbps MP3. That’s where the clever engineers came in.

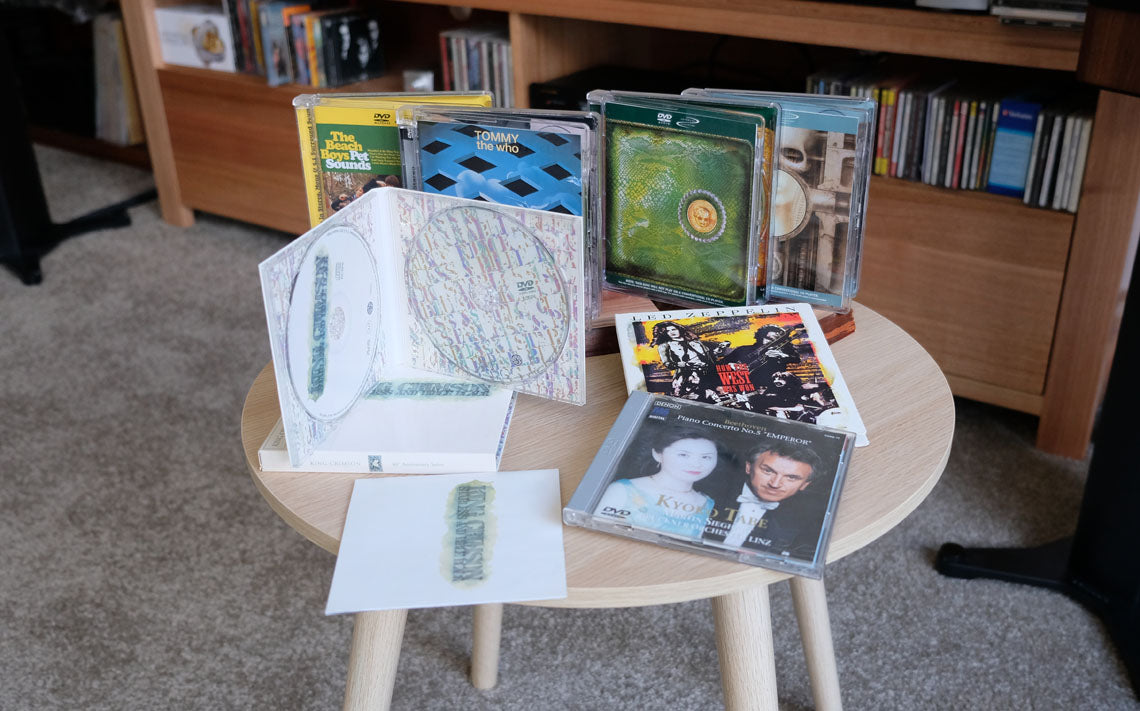

I think the first widespread public appearance of lossless compression of digital audio was in 2000 with the appearance of the DVD-Audio disc, although it may have been pipped by Monkey’s Audio. DVD-Audio was released at around the same time as the Super Audio CD. It managed to deliver incredibly high-resolution audio at up to 24 bits and 192kHz sampling in stereo, and up to 24-bit/96kHz audio in 5.1 channel surround sound.

The 24/192 could (barely) fit into the DVD limitation of a 10Mbps total data rate, but the 5.1 channels at 24/96 couldn’t. The DVD Forum chose something called Meridian Lossless Packing as a means of fitting in that data. (Yes, that’s the same Meridian that’s behind MQA, although the latter doesn’t seem to be quite as solidly founded as MLP.) DVD-Audio eventually (largely) faded away, but MLP lives on. Dolby Laboratories used it as the basis of what became Dolby TrueHD on Blu-ray.
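To see why the surround mix needed help, here is a rough sketch of the arithmetic. I'm using the 10Mbps figure quoted above as the limit, and I'm assuming that 5.1 means six full-rate channels (that is, the LFE channel is carried at the same rate as the others). The names are mine.

```python
DVD_LIMIT_MBPS = 10  # total data rate quoted in the text above


def pcm_rate_mbps(bits_per_sample, sample_rate_hz, channels):
    """Raw PCM data rate in megabits per second."""
    return bits_per_sample * sample_rate_hz * channels / 1_000_000


stereo_24_192 = pcm_rate_mbps(24, 192_000, 2)
surround_24_96 = pcm_rate_mbps(24, 96_000, 6)  # assumption: 5.1 = 6 channels

for label, rate in (("24/192 stereo", stereo_24_192),
                    ("24/96 5.1", surround_24_96)):
    verdict = "fits" if rate <= DVD_LIMIT_MBPS else "does not fit"
    print(f"{label}: {rate:.3f} Mbps, {verdict}")

# The fraction of its raw size the surround mix must shrink to
needed_fraction = DVD_LIMIT_MBPS / surround_24_96
print(f"5.1 must be packed to about {needed_fraction:.0%} of its raw size")
```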

A year after the appearance of the DVD-Audio format, xiph.org – the developer of the freeware (and better than MP3) lossy format Ogg Vorbis – released FLAC, the Free Lossless Audio Codec. This is likely the most widely used lossless audio compression system around, and it now seems to be supported by just about every major computing and digital audio platform.

Three years later, Apple released ALAC, the Apple Lossless Audio Codec. As is Apple’s wont, it remained proprietary. Well, for a while. Seven years later Apple made ALAC open source and free.

How does lossless audio file compression work?

Since there aren’t many redundancies (repetitions, primarily) to be exploited in the data constituting digital audio, a very different approach had to be adopted for lossless – which is to say, bit perfect – digital audio compression.

At this point, I should note that I have no insider knowledge about these systems. This is simply my understanding gathered over a couple of decades, and not denied by any relevant players.

All three of these systems (MLP/Dolby TrueHD, FLAC and ALAC) differ in detail but work along broadly similar lines.

What these lossless systems do is what I call “model and correct”.

Let’s say you have 16-bit audio, so the analogue waveform is mapped onto a 16-bit digital number scale, each number being a sample. It’s impossible to precisely predict what any given sample will be based on what has preceded it. But it is possible to roughly predict it. If the waveform is going up at a particular rate, the next sample will likely be close to a simple extrapolation of the sequence before the sample. A more advanced system may take into account any “curve” in the preceding samples and employ that in its estimation of the next sample.
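Here is a minimal sketch of those two kinds of guess. The sample values are made up for illustration, and the function names are mine. I'm not claiming this is exactly what any particular codec does, just that it's the simplest form of the idea.

```python
def predict_linear(previous):
    """Straight-line extrapolation from the last two samples."""
    return 2 * previous[-1] - previous[-2]


def predict_curved(previous):
    """Extrapolation that also follows the 'curve' in the last three samples."""
    return 3 * previous[-1] - 3 * previous[-2] + previous[-3]


# Hypothetical samples: rising, and rising a little faster each step
history = [100, 130, 165]
actual_next = 205

print("straight line guess:", predict_linear(history))
print("curved guess:       ", predict_curved(history))
print("actual:             ", actual_next)
```

In this made-up case the steps grow by 5 each time, so the curved guess lands right on the actual value while the straight-line guess falls a little short.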

Here, for example, is a sequence of samples from one channel of a real recording. You can see how from any set of three or four samples, you could approximately predict the next one:

So these compression systems all use a predictive algorithm. The nice thing about a predictive algorithm is that it can be built into the decoder, so it doesn’t need to be communicated. That saves space.

But one thing is clear: a predictive model on its own is going to sound terrible. Even if a predicted sample or two is fairly close to what it should be, the output would soon drift far away from the correct signal.

So, what the compressed audio stream mostly consists of is not the original signal, but a stream of corrections to the predictions. And here’s the cool thing about all this: since the predictions aren’t generally too terrible (mathematically, no matter how bad they’d sound), the corrections are only modestly sized. The original signal in our example was 16 bits, remember. Our corrections can generally be contained in 4, 6 or 8 bits, with perhaps the occasional 12 or even 16 bits required for extreme transients. If the average requirement for the correction stream is 8 bits, then that reduces the size of the file or data stream by fifty percent.
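To make “model and correct” concrete, here is a toy sketch. It is my own illustration, not the actual FLAC, ALAC or MLP algorithm: the predictor is a simple straight-line extrapolation, the test signal is a synthetic 440Hz tone rather than real music, and all the names are mine.

```python
import math


def predict(history):
    """Guess the next sample by continuing the slope of the last two."""
    if not history:
        return 0
    if len(history) == 1:
        return history[-1]
    return 2 * history[-1] - history[-2]


def encode(samples):
    """Turn samples into corrections (actual minus predicted)."""
    return [s - predict(samples[max(0, i - 2):i])
            for i, s in enumerate(samples)]


def decode(corrections):
    """Rebuild the samples by running the same predictor and adding each correction."""
    samples = []
    for correction in corrections:
        samples.append(predict(samples[-2:]) + correction)
    return samples


def signed_bits(value):
    """Bits needed to hold value as a two's complement number."""
    magnitude = value if value >= 0 else ~value
    return magnitude.bit_length() + 1


# Assumption: a synthetic 16-bit tone stands in for real music
tone = [round(20000 * math.sin(2 * math.pi * 440 * n / 44100))
        for n in range(1000)]

corrections = encode(tone)
restored = decode(corrections)

# Skip the first two samples, where the predictor has nothing to go on
widest = max(signed_bits(c) for c in corrections[2:])

print("bit-perfect round trip:", restored == tone)
print("widest correction, in bits:", widest)
```

With this smooth tone the corrections should need only about half the 16 bits of the original samples. Real music is less cooperative, and real codecs use cleverer predictors and pack the corrections more tightly than a fixed bit width.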

Efficiency

That fifty percent is about how efficient FLAC is: files typically come out at between 40% and 60% of their original size. Here’s a 16-bit jazz album losslessly compressed to FLAC format. As you can see, these tracks average to around 760kbps, which means they were compressed to 54% of their original size.

I should clarify that. The efficiency depends on a lot of things. A lot of extreme transients will increase the file size, because the corrections will be bigger, requiring more bits to encode.

And completely unpredictable data cannot be compressed at all with a system that depends on at least some predictability. Unpredictable data goes by another name: noise.
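You can see the effect with a crude experiment. This is a sketch under my own assumptions: a straight-line predictor, a synthetic tone as the “predictable” signal, random numbers as the noise, and the average number of bits needed per correction as a rough stand-in for compressed size. All the names are mine.

```python
import math
import random


def corrections(samples):
    """Actual sample minus a straight-line guess from the previous two."""
    out = []
    for i, sample in enumerate(samples):
        if i == 0:
            guess = 0
        elif i == 1:
            guess = samples[0]
        else:
            guess = 2 * samples[i - 1] - samples[i - 2]
        out.append(sample - guess)
    return out


def signed_bits(value):
    """Bits needed to hold value as a two's complement number."""
    magnitude = value if value >= 0 else ~value
    return magnitude.bit_length() + 1


def average_bits(samples):
    """Average width of the corrections: a crude proxy for compressed size."""
    widths = [signed_bits(c) for c in corrections(samples)]
    return sum(widths) / len(widths)


rng = random.Random(0)  # fixed seed so the run is repeatable
count = 5000

tone = [round(20000 * math.sin(2 * math.pi * 440 * n / 44100))
        for n in range(count)]
noise = [rng.randint(-32768, 32767) for _ in range(count)]

tone_bits = average_bits(tone)
noise_bits = average_bits(noise)

print(f"tone:  {tone_bits:.1f} bits per correction")
print(f"noise: {noise_bits:.1f} bits per correction")
```

The corrections for the tone should be far narrower than the original 16 bits. For the noise, the guesses are useless, so the corrections come out about as wide as the samples themselves, or wider.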

Around ten years ago I wrote a piece for Sound+Image magazine in which I analysed the compression ratios for the main soundtrack on 171 Blu-ray discs. I found that for those with 5.1-channel 16-bit audio, Dolby TrueHD managed to compress them to an impressive 33% or so of their original size. However, it seemed that with the 24-bit tracks, the last eight bits of 24-bit Dolby TrueHD could only be compressed to around 76%. Why? Because much of what was in those least significant eight bits was noise. Read it here if you want to get deep into numbers and methodology.

(But I should note that the reason the 16-bit stuff managed a higher level of compression than is typical for stereo FLAC music is that with 5.1 channels, much of the surround stuff was very low in level and unchallenging, and thus highly compressible, while the LFE track only uses a small proportion of the available bandwidth.)

Conclusion

So, if you’ve wondered how lossless compression actually works, now you know. More importantly, this knowledge should convince you that it’s not snake oil. The decoder for your FLAC or ALAC music files will perfectly reconstruct the original PCM file, down to the very last bit.

* Just to be clear: B is a byte and b is a bit. MB is megabyte, Mb is megabit. There are 8 bits in a byte. It is conventional to talk about computer storage in bytes, and the rate of data required for digital audio in bits per second.